This post is republished from the Chronosphere blog. With Chronosphere’s acquisition of Calyptia in 2024, Chronosphere became the primary corporate sponsor of Fluent Bit. Eduardo Silva — the original creator of Fluent Bit and co-founder of Calyptia — leads a team of Chronosphere engineers dedicated full-time to the project, ensuring its continuous development and improvement.

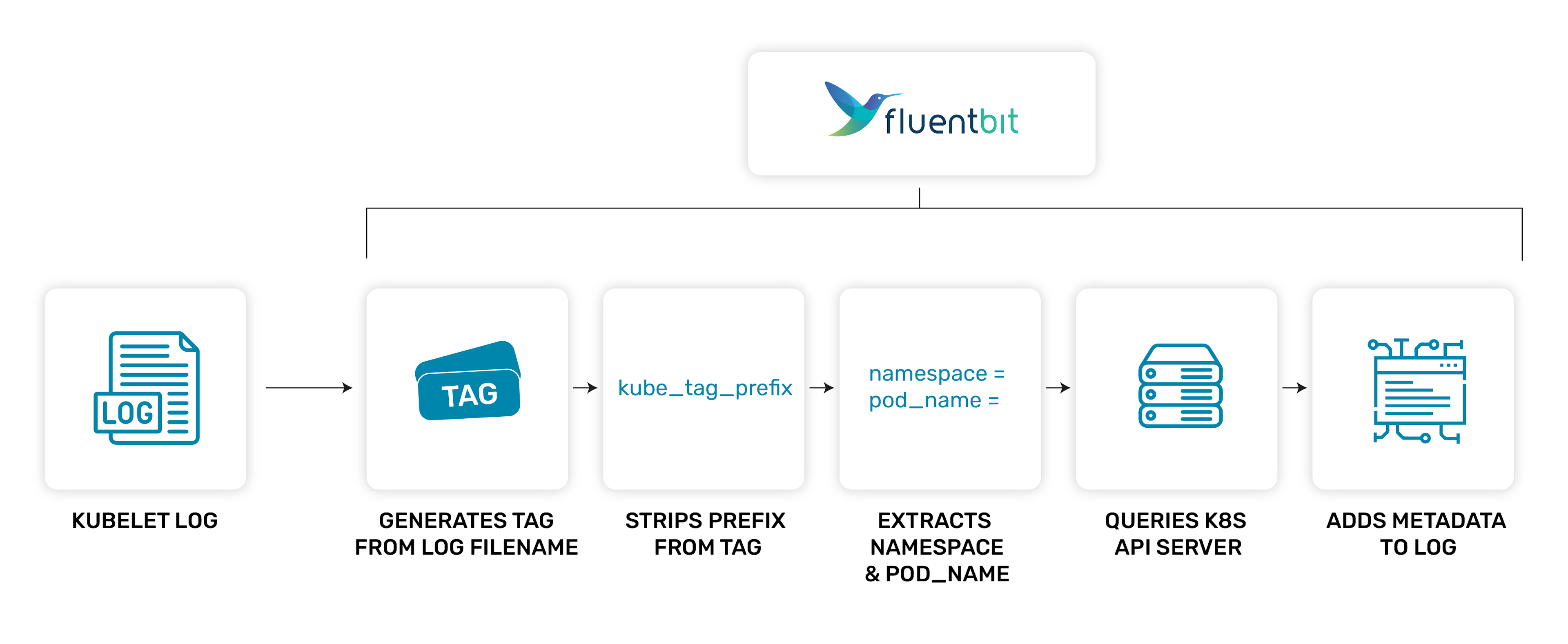

When run in Kubernetes (K8s) as a daemonset, Fluent Bit can ingest Kubelet logs and enrich them with additional metadata from the Kubernetes API server. This includes any annotations or labels on the pod and information about the namespace, pod, and container the log came from. It is simple to set up and is, in fact, the default when deploying Fluent Bit via the helm chart.

The documentation goes into the full details of what metadata are available and how to configure the Fluent Bit Kubernetes filter plugin to gather them. This post primarily gives an overview of how the filter works and provides common troubleshooting tips, particularly with issues caused by misconfiguration.

How to get Kubernetes metadata?

Let us take a step back and look at what information is required to query the K8s API server for metadata about a particular pod. We need two things:

- Namespace

- Pod name

Cunningly, the Kubelet log files on the node provide both pieces of information in their filenames by design. This lets Fluent Bit query the K8s API server when all it has is the log file: given a pod log filename, we can look up the rest of the metadata describing the pod.
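As a minimal sketch of why those two values are enough, the snippet below builds the path of the standard Kubernetes core API endpoint for a single pod. The function name `pod_metadata_path` is hypothetical and exists only for illustration; it is not part of Fluent Bit.

```python
from urllib.parse import quote


def pod_metadata_path(namespace: str, pod_name: str) -> str:
    """Build the Kubernetes core API path that describes one pod.

    Illustrative helper only: it shows that namespace and pod name
    together identify the resource to query.
    """
    return (
        f"/api/v1/namespaces/{quote(namespace, safe='')}"
        f"/pods/{quote(pod_name, safe='')}"
    )


print(pod_metadata_path("default", "apache-logs-annotated"))
```

The response for that path carries the labels and annotations that end up in the enriched record.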

Using Fluent Bit to enrich the logs

First off, we need the actual logs from the Kubelet. This is typically done by using a daemonset to ensure a Fluent Bit pod runs on every node and then mounts the Kubelet logs from the node into the pod.

Now that we have the log files themselves we should be able to extract enough information to query the K8s API server. We do this with a default setup using the tail plugin to read the log files and inject the filename into the tag:

[INPUT]

Name tail

Path /var/log/containers/*.log

Tag kube.*

Wildcards in the tag are handled in a special way by the tail input plugin. This configuration injects the full path and filename of the log file into the tag after the kube. prefix.

Once the kubernetes filter receives these records, it parses the tag to extract the information required. To do so, it uses the kube_tag_prefix value to strip off the redundant tag and path components, leaving just the log filename, which contains the namespace and pod name needed to query the K8s API server. Using the defaults would look like this:

[FILTER]

Name kubernetes

Match kube.*

Kube_Tag_Prefix kube.var.log.containers.

Fluent Bit inserts the extra metadata from the K8s API server under the top-level kubernetes key.

Using an example, we can see how this flows through the system.

Log file:

/var/log/containers/apache-logs-annotated_default_apache-aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6.log

Gives us a tag like so (slashes are replaced with dots):

kube.var.log.containers.apache-logs-annotated_default_apache-aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6.log

We then strip off the kube_tag_prefix:

apache-logs-annotated_default_apache-aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6.log

Now we can extract the relevant fields with a regex:

(?<pod_name>[a-z0-9](?:[-a-z0-9]*[a-z0-9])?(?:\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*)_(?<namespace_name>[^_]+)_(?<container_name>.+)-(?<container_id>[a-z0-9]{64})\.log$

- pod_name = apache-logs-annotated

- namespace_name = default

- container_name = apache

- container_id = aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6
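The flow above can be reproduced in a few lines of Python. This is only a sketch of the steps described in this post, not Fluent Bit's implementation: the helper names `path_to_tag` and `parse_tag` are hypothetical, and the regex is rewritten with Python's `(?P<name>...)` group syntax because Python's `re` module does not accept `(?<name>...)`.

```python
import re

KUBE_TAG_PREFIX = "kube.var.log.containers."

# Same pattern as above, using Python's named-group syntax.
TAG_REGEX = re.compile(
    r"(?P<pod_name>[a-z0-9](?:[-a-z0-9]*[a-z0-9])?"
    r"(?:\.[a-z0-9](?:[-a-z0-9]*[a-z0-9])?)*)"
    r"_(?P<namespace_name>[^_]+)"
    r"_(?P<container_name>.+)"
    r"-(?P<container_id>[a-z0-9]{64})\.log$"
)


def path_to_tag(path: str, tag_pattern: str = "kube.*") -> str:
    """Expand the wildcard with the file path, slashes replaced by dots."""
    expanded = path.lstrip("/").replace("/", ".")
    return tag_pattern.replace("*", expanded)


def parse_tag(tag: str, prefix: str = KUBE_TAG_PREFIX):
    """Drop the prefix and extract the fields from the remaining filename.

    This sketch assumes the tag really starts with the prefix.
    Returns a dict of fields, or None if the filename does not match.
    """
    filename = tag[len(prefix):]
    match = TAG_REGEX.search(filename)
    return match.groupdict() if match else None


log_path = (
    "/var/log/containers/apache-logs-annotated_default_apache-"
    "aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6.log"
)
tag = path_to_tag(log_path)
print(tag)
print(parse_tag(tag))
```

Running it against the example log file yields the same four fields listed above.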

Misconfiguration woes

There are a few common errors that we frequently see in community channels:

- Mismatched configuration of tags and prefix

- Invalid RBAC/unauthorised

- Dangling symlinks for pod logs

- Caching affecting dynamic labels

- Incorrect parsers

Mismatched tag and tag prefix

The most common problems occur when the default tag is changed for the tail input plugin or when a different path is used for the logs. When this happens, the kube_tag_prefix must also be changed to ensure it strips everything off except the filename.

Otherwise, the kubernetes filter ends up with a garbage filename: it either complains about it immediately or injects invalid data into the request to the K8s API server. In either case, the filter will not enrich the log record, as it has no additional data to add.
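To make the failure mode concrete, here is a small sketch built on two assumptions that are not confirmed by this post: that the prefix is skipped by length rather than checked against the tag, and that the tail input tag was changed to a hypothetical `mykube.*` while kube_tag_prefix kept its default. The helper name `strip_prefix_by_length` is likewise made up for illustration.

```python
KUBE_TAG_PREFIX = "kube.var.log.containers."  # default prefix shown earlier


def strip_prefix_by_length(tag: str, prefix: str = KUBE_TAG_PREFIX) -> str:
    """Drop as many leading characters as the prefix is long.

    Assumption for illustration: the prefix is not verified against the tag.
    """
    return tag[len(prefix):]


log_name = (
    "apache-logs-annotated_default_apache-"
    "aeeccc7a9f00f6e4e066aeff0434cf80621215071f1b20a51e8340aa7c35eac6.log"
)
good_tag = "kube.var.log.containers." + log_name
bad_tag = "mykube.var.log.containers." + log_name  # hypothetical changed tag

# The first underscore-separated field is what would be used as the pod name.
print(strip_prefix_by_length(good_tag).split("_")[0])  # apache-logs-annotated
print(strip_prefix_by_length(bad_tag).split("_")[0])   # s.apache-logs-annotated
```

The mismatched tag still produces something that looks like a pod name, which is why the problem often only shows up as a failed lookup against the K8s API server.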

Typically, you will see a warning message in the log if the tag is obviously wrong, or with log_level debug, you can see the requests to the K8s API server with invalid pod name or namespace plus the response indicating there is no such pod.

For example, with the official helm chart and two extra characters (my) added to the default tag prefix, the pod name in the request to the K8s API server picks up two extra characters at the front: it should be fluent-bit-cs6sg but is s.fluent-bit-cs6sg. No such pod exists, so the lookup fails. Without log_level debug, you just get no metadata.

Invalid RBAC

The Fluent Bit pod must have the relevant roles added to its service account that allow it to query the K8s API for the information it needs. Unfortunately, this error is typically just reported as a connectivity warning to the K8s API server, so it can be easily missed.

To troubleshoot this issue, use log_level debug to see the response from the K8s API server. The message will basically say “missing permissions to do X” or something similar and then it is obvious what is wrong.

Without log_level debug, all you will see is the connectivity warning.

Dangling symlinks

Kubernetes has evolved over the years, and new container runtimes have also come along. As a result, the filename requirements for Kubelet logs may be handled using a symlink from a correctly named pod log file to the actual container log file created by the container runtime. When mounting the pod logs into your container, ensure they are not dangling links and that their destination is also correctly mounted.
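One way to check for this from inside the Fluent Bit container is to list symlinks whose targets do not resolve. The sketch below assumes the logs are mounted at /var/log/containers, the path used earlier in this post; the function name `find_dangling_symlinks` is hypothetical.

```python
import os
from typing import List

LOG_DIR = "/var/log/containers"  # assumed mount point, adjust to your setup


def find_dangling_symlinks(directory: str) -> List[str]:
    """Return the symlinks in `directory` whose targets cannot be resolved."""
    dangling = []
    for entry in os.scandir(directory):
        # is_symlink() inspects the link itself; os.path.exists() follows it
        # and returns False when the destination is missing.
        if entry.is_symlink() and not os.path.exists(entry.path):
            dangling.append(entry.path)
    return sorted(dangling)


if os.path.isdir(LOG_DIR):
    for path in find_dangling_symlinks(LOG_DIR):
        print(f"dangling: {path} -> {os.readlink(path)}")
```

Any path it prints points at a destination that is not mounted into the container, so that directory needs to be added to the pod's volume mounts.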

Caching

Fluent Bit caches the response from the K8s API server to prevent rate limiting or overloading the server. As a result, if annotations or labels are applied or removed dynamically then those changes will not be seen until the next time the cache is refreshed. A simple test is just to roll/delete the pod so a fresh one is deployed and check if it picks up the changes.

Log file parsing

Another common misconfiguration is using custom container runtime parsers in the tail input. This problem is generally a legacy issue as previously there were no inbuilt CRI or docker multiline parsers. The current recommendation is always to configure the tail input using the provided parsers as per the documentation:

[INPUT]

Name tail

Path /var/log/containers/*.log

multiline.parser docker, cri

Do not use your own CRI or docker parsers, as they must cope with merging partial lines (identified with a P instead of an F).
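To illustrate what merging partial lines involves, here is a rough sketch that joins CRI-format lines (timestamp, stream, P or F flag, message) until a line flagged F completes the record. It is not the built-in cri parser; the function name `merge_cri_lines` and the sample lines are made up for illustration.

```python
from typing import Dict, Iterable, Iterator, List


def merge_cri_lines(lines: Iterable[str]) -> Iterator[str]:
    """Join partial (P) CRI log lines with the full (F) line that ends them."""
    pending: Dict[str, List[str]] = {}  # one buffer per stream (stdout/stderr)
    for line in lines:
        parts = line.rstrip("\n").split(" ", 3)
        if len(parts) < 3:
            continue  # not a CRI-format line, skip it in this sketch
        stream, flag = parts[1], parts[2]
        message = parts[3] if len(parts) > 3 else ""
        pending.setdefault(stream, []).append(message)
        if flag == "F":
            # F marks the final chunk, so emit the reassembled message.
            yield "".join(pending.pop(stream))


sample = [
    "2023-01-01T00:00:00.000000000Z stdout P first part, ",
    "2023-01-01T00:00:00.000000001Z stdout F second part",
    "2023-01-01T00:00:00.000000002Z stderr F a complete line",
]
print(list(merge_cri_lines(sample)))
```

A custom regex parser that treats every line as a complete record would emit the two stdout chunks as separate, truncated messages.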

The parsers for the tail plugin are not applied sequentially but are mutually exclusive, with the first one matching being applied. The goal is to handle multiline logs created by the Kubelet itself. Later, you can have another filter to handle multiline parsing of the application logs themselves after they have been reconstructed here.
