v1.6.0

October 9, 2020

Release Notes v1.6.0

Fluent Bit is a Fast and Lightweight Data Forwarder for Linux, BSD and OSX. We are proud to announce the availability of Fluent Bit v1.6.0.

If you are upgrading from a previous version, you must read the Upgrading Notes section of our documentation:

https://docs.fluentbit.io/manual/installation/upgrade_notes

Changes

Fluent Bit v1.6 is the next major release and includes several improvements:

Community Updates

The Fluent Bit v1.6 release comes with exciting news from the community. The number of cloud providers and end users adopting and contributing back to Fluent Bit is continuously increasing, and this is reflected in the project's quality and new feature sets:

Core

The following are the most visible changes for end-users:

- Proper handling of the SIGTERM signal for a graceful shutdown.

- When formatting JSON, duplicated keys are handled by preserving the last one.
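The "last one wins" rule for duplicated keys can be illustrated with a small Python sketch. This is only a model of the described behavior, not Fluent Bit's implementation; the helper name `keep_last` and the sample record are made up for the example.

```python
import json


def keep_last(pairs):
    """Build a dict from (key, value) pairs; a repeated key keeps its last value."""
    result = {}
    for key, value in pairs:
        result[key] = value  # a later occurrence overwrites the earlier one
    return result


# Hypothetical record in which the key "log" appears twice.
raw = '{"log": "first", "level": "info", "log": "second"}'

# object_pairs_hook receives every key/value pair, duplicates included.
record = json.loads(raw, object_pairs_hook=keep_last)
print(record)
```

Only the value from the final `log` entry survives in `record`; the earlier one is dropped.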

Tail Input (local files)

There are three major changes in this release for the Tail input plugin. It now effectively follows files from the end, like tail -f; in previous versions it always read them from the beginning. Files that already exist at start are followed from the end, while files newly discovered at runtime or after rotation are followed from the beginning. If you want to use the older mechanism, you can enable the new option read_from_head.
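The rule for where reading starts can be summarized in a short Python sketch. It is a simplified model of the behavior described above, not the plugin's code; the function `initial_offset` and its parameters are hypothetical names chosen for illustration.

```python
def initial_offset(file_size, present_at_startup, read_from_head=False):
    """Return the byte offset at which a tailed file would start being read.

    file_size          -- current size of the file in bytes
    present_at_startup -- True if the file already existed when tailing began,
                          False if it was discovered later or after rotation
    read_from_head     -- models the read_from_head option (older behavior)
    """
    if read_from_head or not present_at_startup:
        return 0          # read the file from the beginning
    return file_size      # skip existing content, like `tail -f`


# A 4 KiB file that exists at startup is followed from its end...
print(initial_offset(4096, present_at_startup=True))
# ...unless read_from_head is enabled.
print(initial_offset(4096, present_at_startup=True, read_from_head=True))
# A file discovered at runtime is read from the beginning.
print(initial_offset(4096, present_at_startup=False))
```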

Other performance improvements have been implemented for the db option (database file). The synchronization mechanism has been changed to normal by default, and the default journal mode has also changed. This is a big performance improvement for most users.

If you want to increase performance even more when using a database file, we have introduced the option db.locking (default: false), which specifies that the database file is exclusively for Fluent Bit use. This reduces the number of system calls required, at the cost of blocking any external connection to the database file.

If you are a Windows user, you can now enjoy large file support.

Amazon S3 & Kinesis Firehose Outputs

Amazon has made a great contribution to this major release: two new plugins plus other important improvements in existing components. The list of changes is below:

- New plugin for Amazon S3

- New high performance core plugin for Amazon Kinesis Data Firehose

- The AWS Metadata filter now supports more types of metadata, including VPC ID

- All AWS outputs now support custom STS endpoints, enabling you to use a VPC endpoint for STS

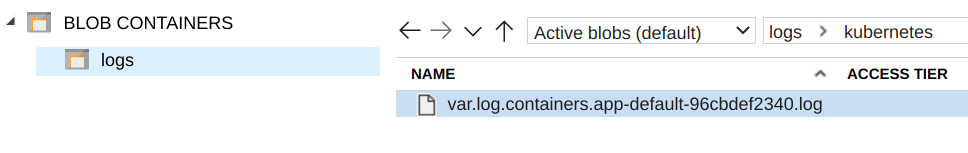

Microsoft Azure Blob Output

In joint work with the Microsoft Azure team, we created the new Azure Blob output plugin. This connector is flexible enough to be used with the BlockBlob or AppendBlob types. In addition, it can be used with custom endpoints: by default it connects to the Azure service, but optionally it can be used with the Azurite emulator for local testing.

Learn more about this new output here:

https://docs.fluentbit.io/manual/pipeline/outputs/azure_blob

TensorFlow Lite Filter

The new TensorFlow filter allows running Machine Learning inference tasks on the records of data coming from input plugins or the stream processor.

TensorFlow Lite is a lightweight open-source deep learning framework used for mobile and IoT applications. TensorFlow Lite only handles inference (not training); therefore, it loads pre-trained models (.tflite files) that have been converted into the TensorFlow Lite format (FlatBuffer).

Learn more about this new filter here:

https://docs.fluentbit.io/manual/v/master/pipeline/filters/tensorflow

Lua Filter

The Lua filter has been improved in two main areas:

When a script receives a timestamp, by default it gets it as a floating point number, but when the modified record is returned the exact precision is likely lost. For users who require exact timestamp handling, we have introduced a new configuration property called time_as_table. When enabled, the script receives the timestamp as a Lua table with the following structure:

timestamp['sec'] = EPOCH SECONDS

timestamp['nsec'] = NANOSECONDS

When the value is returned, the timestamp does not lose precision.
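Why a floating point timestamp loses precision can be shown with a short Python sketch. It is written in Python rather than Lua purely for illustration, it assumes 64-bit floats, and the helper names and the sample timestamp are invented for the example.

```python
def to_float(sec, nsec):
    """Collapse seconds and nanoseconds into a single 64-bit float."""
    return sec + nsec / 1e9


def from_float(ts):
    """Split a float timestamp back into (seconds, nanoseconds)."""
    sec = int(ts)
    nsec = round((ts - sec) * 1e9)
    return sec, nsec


# Hypothetical timestamp kept as two integers, like the sec/nsec table above.
original = {"sec": 1602201600, "nsec": 123456789}

# Round trip through a float: the nanosecond field drifts, because a 64-bit
# float cannot resolve single nanoseconds at this magnitude.
as_float = to_float(original["sec"], original["nsec"])
sec_back, nsec_back = from_float(as_float)
drift_ns = abs(nsec_back - original["nsec"])

print("seconds preserved:", sec_back == original["sec"])
print("nanoseconds preserved:", nsec_back == original["nsec"])
print("drift in nanoseconds:", drift_ns)
```

Keeping the two integers separate, as the table form does, avoids the conversion entirely, so nothing is rounded.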

Learn more about our Lua filter here:

https://docs.fluentbit.io/manual/v/master/pipeline/filters/lua

Elasticsearch Output

The Elasticsearch output plugin now uses a larger default response buffer of 512k and supports nanosecond timestamp precision.

Learn more about this plugin here:

https://docs.fluentbit.io/manual/pipeline/outputs/elasticsearch

Kafka Output

There are cases where buffered messages that are ready for delivery cannot be flushed because the Kafka broker is not fast enough. This local buffering is handled by rdkafka.

The Kafka output connector now exposes a new configuration property called queue_full_retries, which tells the connector how many times to retry enqueuing the data into rdkafka. If the process fails that many times (10 by default), it issues a full retry to the Fluent Bit engine.

Note: setting this value to 0 is possible if you desire unlimited enqueue retries.
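The retry limit described above can be sketched as a small loop. This is a simplified Python model, not the connector's code: `enqueue_with_retries` and the `try_enqueue` callback are hypothetical names, and any waiting between attempts is left out.

```python
def enqueue_with_retries(try_enqueue, queue_full_retries=10):
    """Try to enqueue until it succeeds or the retry limit is reached.

    try_enqueue        -- hypothetical callable; returns True once the data
                          was accepted, False while the local queue is full
    queue_full_retries -- maximum failed attempts; 0 means unlimited
    Returns "ok" on success, or "retry" to hand the data back to the engine
    for a full retry.
    """
    failures = 0
    while True:
        if try_enqueue():
            return "ok"
        failures += 1
        if queue_full_retries > 0 and failures >= queue_full_retries:
            return "retry"


def queue_that_frees_up_after(n):
    """Build a fake enqueue callback that fails n times, then succeeds."""
    state = {"calls": 0}

    def try_enqueue():
        state["calls"] += 1
        return state["calls"] > n

    return try_enqueue


print(enqueue_with_retries(queue_that_frees_up_after(3)))      # within the limit
print(enqueue_with_retries(queue_that_frees_up_after(50)))     # limit reached
print(enqueue_with_retries(queue_that_frees_up_after(50), 0))  # unlimited
```

With a value of 0 the loop only ends when the enqueue succeeds, which is why the unlimited case above uses a queue that eventually frees up.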

Learn more about this plugin here:

https://docs.fluentbit.io/manual/pipeline/outputs/kafka

PostgreSQL Output

Our PostgreSQL output connector landed in the v1.5 series, and now, in addition to PostgreSQL, it is compatible with CockroachDB. The new option cockroachdb is available in the configuration (default: off).

Learn more about this plugin here:

https://docs.fluentbit.io/manual/pipeline/outputs/postgresql

Stackdriver Output

The new version of the Google Stackdriver plugin supports monitored resource ingestion from the log entry. In addition, it comes with a correction to project ID usage.

If you are upgrading from a previous version please review the Upgrade Notes:

https://docs.fluentbit.io/manual/installation/upgrade-notes

Learn more about this plugin here:

https://docs.fluentbit.io/manual/pipeline/outputs/stackdriver

Contributors

Every release involves many people contributing in different areas such as bug reporting, troubleshooting, documentation and coding. Without these contributions from the community, the project would not be the same and would not be in the good shape it is in now. So THANK YOU to everyone who takes part in this journey!

Join us

We want to hear from you. Our community is growing and you can be part of it! You can contact us at:

- Slack: http://slack.fluentd.org

- Mailing list: https://groups.google.com/forum/#!forum/fluent-bit

- GitHub: http://github.com/fluent/fluent-bit

- IRC: irc.freenode.net #fluentbit

- Twitter: @fluentbit
